After a month in Google's approval limbo, AutoVoice has finally been approved for use as a third-party integration in Google Home. With AutoVoice integration, you can send commands to your phone that Tasker will be able to react to, allowing you to run countless automation scripts using just your voice.

Previously, this required a convoluted workaround involving IFTTT sending commands to your device via Join, but now you can send natural language commands straight to your device. We at XDA have been awaiting this release, and now that it's here, we'll show you how to use it.

The True Power of Google Home has been Unlocked

The above video was made by the developer of AutoVoice, Joao Dias, prior to the approval of the AutoVoice integration. I am re-linking it here only to demonstrate the possibilities of this integration, which is something we can all now enjoy since Google has finally rolled out AutoVoice support for everyone. As with any Tasker plug-in, there is a bit of a learning curve involved, so even though the integration has been available since last night, many people have been confused as to how to make it work. I've been playing with this since last night and will show you how to make your own AutoVoice commands trigger through speaking with Google Home.

A request from Joao Dias, developer of AutoVoice: Please be aware that today is the first day that AutoVoice integration with Google Home is live for all users. As such, there may be some bugs that have yet to be stamped out. Rest assured that he is hard at work fixing anything he comes across before the AutoVoice/Home integration is released to the stable channel of AutoVoice in the Play Store.

Getting Started

There are a few things you need before you can take advantage of this new integration. The first and most obvious requirement is a Google Home device. If you don't have one yet, they are available in the Google Store among other retailers. Amazon Alexa support is still pending approval, so if you have one of those you will have to wait before you can try out this integration.

You will need:

Once you have each of these applications installed, it's time to get to work. The first thing you will need to do is enable the AutoVoice integration in the Google Home app. Open up the Google Home app and then tap on the Remote/TV icon in the top right-hand corner. This will open up the Devices page where it lists your currently connected cast-enabled devices (including your Google Home). Tap on the three-dot menu icon to open up the settings page for your Google Home. Under "Google Assistant settings" tap on "More." Finally, under the listed Google Home integration sections, tap on "Services" to bring up the list of available third-party services. Scroll down to find "AutoVoice" in the list, and in the about page for the integration you will find the link to enable the integration.

Once you have enabled this integration, you can now start talking to AutoVoice through your Google Home! Check if it is enabled by saying either "Ok Google, ask auto voice to say hello" or "Ok Google, let me speak to auto voice." If your Google Home responds with "sure, here's auto voice" and then enters the AutoVoice command prompt, the integration is working. Now we can set up AutoVoice to recognize our commands.

Setting up AutoVoice

For the sake of this tutorial, we will make a simple Tasker script to help you locate your phone. By saying any natural variation of "find my phone", Tasker will start playing a loud beeping noise so you can quickly discern where you left your device. Of course, you can easily make this more complex by perhaps locating your device via GPS then sending yourself an e-mail with a picture taken by the camera attached to it, but the part we will focus on is simply teaching you how to get Tasker to recognize your Google Home voice commands. Using your voice, there are two ways you can issue commands to Tasker via Google Home.

The first is by speaking your command exactly as you set it up. That means there is absolutely no room for error in your command. If you, for instance, want to locate your device and you set up Tasker to recognize when you say "find my phone" then you must exactly say "find my phone" to your Google Home (without any other words spliced in or placed at the beginning or end) otherwise Tasker will fail to recognize the command. The only way around this is to come up with as many possible variations of the command as you can think of, such as "find my device", "locate my phone", "locate my device" and hope that you remember to say at least one variant of the command you set up. In other words, this first method suffers from the exact same problem as setting up Tasker integration via IFTTT: it is wildly inflexible with your language.
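To picture why this first method is so rigid, here is a rough Python sketch of exact-phrase matching. It is purely illustrative and is not AutoVoice's actual code; the `EXACT_PHRASES` set and the `matches_exact` helper are names I made up, and ignoring letter case and surrounding spaces is my own assumption.

```python
# Hypothetical illustration only; this is not how AutoVoice is implemented.
# Every phrase you want recognized has to be listed by hand.
EXACT_PHRASES = {
    "find my phone",
    "find my device",
    "locate my phone",
    "locate my device",
}


def matches_exact(spoken: str) -> bool:
    """Return True only if the whole spoken text equals a listed phrase."""
    # Assumption: case and leading/trailing spaces are ignored.
    return spoken.strip().lower() in EXACT_PHRASES


print(matches_exact("find my phone"))         # True
print(matches_exact("please find my phone"))  # False: one extra word breaks it
```

A single extra word is enough to miss every listed phrase, which is why you end up listing as many variants as you can think of.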

The second, and my preferred method, is using Natural Language. Natural Language commands allow you to speak naturally to your device, and Tasker will still be able to recognize what you are saying. For instance, if I were to say something much longer like "Ok Google, can you ask auto voice to please locate my device as soon as possible" it will still recognize my command even though I threw in the superfluous "please" and "as soon as possible" into my spoken command. This is all possible thanks to the power of API.AI, which is what AutoVoice checks your voice command against to interpret what you meant to say and return with any variables you might have set up.
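To get a feel for why looser matching tolerates filler words, here is a toy Python sketch. To be clear, this is a simple keyword check that I swapped in for illustration; it is not how API.AI works, and the `INTENTS` table and `guess_intent` function are hypothetical names.

```python
import re

# Toy keyword matcher, NOT API.AI's real language processing.
# Each hypothetical intent needs one word from every keyword group.
INTENTS = {
    "findmydevice": [{"find", "locate"}, {"phone", "device"}],
}


def guess_intent(spoken: str):
    """Return the first intent whose keyword groups all appear in the text."""
    words = set(re.findall(r"[a-z']+", spoken.lower()))
    for intent, keyword_groups in INTENTS.items():
        if all(words & group for group in keyword_groups):
            return intent
    return None


print(guess_intent("can you please locate my device as soon as possible"))
# findmydevice
```

Extra words like "please" simply don't matter to a matcher of this kind, whereas the exact-phrase approach would reject the whole sentence.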

Sounds great! You are probably more interested in the second option, as I was. Unfortunately, the Natural Language commands are taxing on Mr. Dias's servers, so you will be required to sign up for a $0.99 per month subscription in order to use Natural Language commands. It is a bit of a downer that this is required, but the fee is more than fair considering how little it costs and how powerful and useful it will make your Google Home.

Important: if you want to speak "natural language commands" to your Google Home device, then you will need to follow these next steps. Otherwise, skip to creating your commands below.

Setting up Natural Language Commands

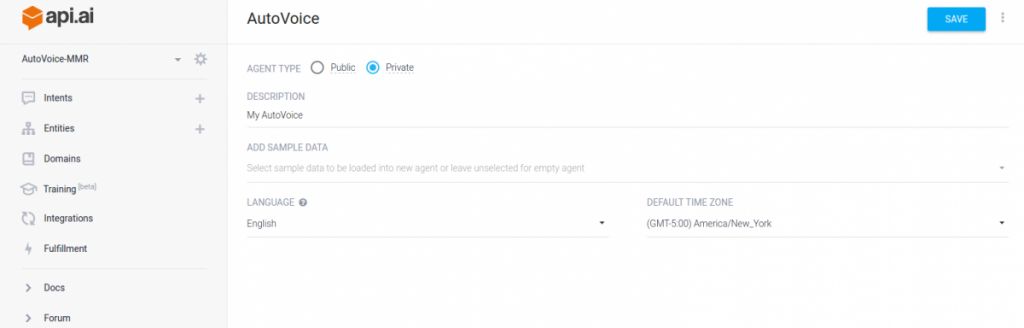

Since AutoVoice relies on API.AI for its natural language processing, we will need to set up an API.AI account. Go to the website and click "sign up free" to make a free account. Once you are in your development console, create a new agent and name it AutoVoice. Make the agent private and click save to create the agent. After you save the agent, it will appear in the left sidebar under the main API.AI logo.

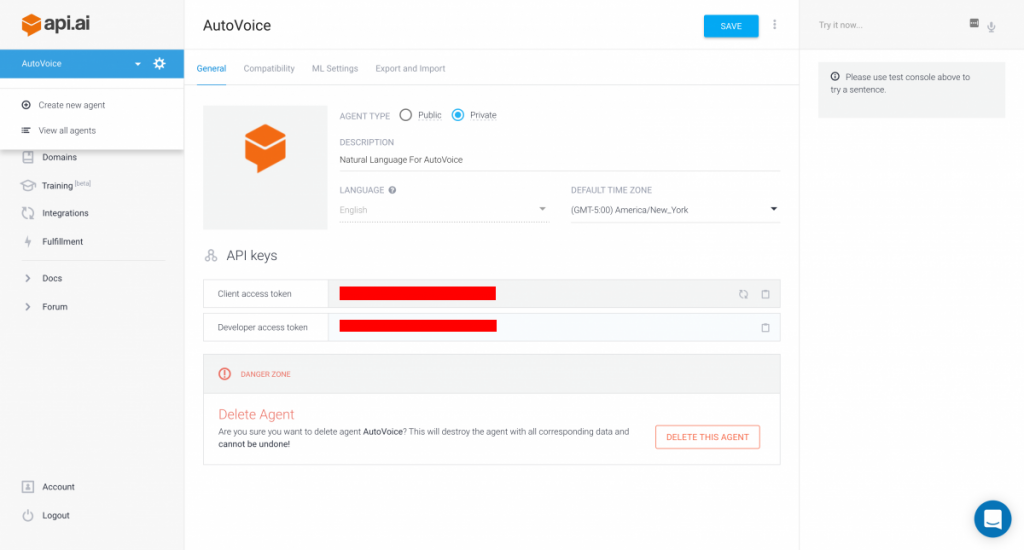

Once you have created your API.AI account, you will need to get your access tokens so AutoVoice can connect to your account. Click on the gear icon next to your newly created agent to bring up the settings page for your AutoVoice agent.

Under "API keys" you will see your client access token and your developer access token. You will need to save both. On your device, open up AutoVoice beta. Click on "Natural Language" to open up the settings page and then click on "Setup Natural Language." Now enter the two tokens into the given text boxes.

Now AutoVoice will be able to send and receive commands from API.AI. However, this functionality is restricted until you subscribe to AutoVoice. Go back to the Natural Language settings page and click on "Commands." Right now, the command list should be empty save for a single command called "Default Fallback Intent." (Note in my screenshot, I have set up a few of my own already). At the bottom, you will notice a toggle called "Use for Google Assistant/Alexa." If you enable this toggle you will be prompted to subscribe to AutoVoice. Accept the subscription if you wish to use Natural Language commands.

Creating Tasker Profiles to react to Natural Language Commands

Open up Tasker and click on the "+" button in the bottom right hand corner to create a new profile. Click on "Event" to create a new Event Context. An Event Context is a trigger that is only fired once when the context is recognized - in this case, we will be creating an Event linked to an AutoVoice Natural Language Command. In the Event category, browse to Plugin --> AutoVoice --> Natural Language.

Click on the pencil icon to enter the configuration page to create an AutoVoice Natural Language Command. Click on "Create New Command" to build an AutoVoice Command. In the dialog box that appears, you will see a text field for your command as well as another text field for the response you want Google Home to say. Type or speak the commands you want AutoVoice to recognize. While you are not required to list every possible variant of the command you want it to recognize, list at least a few just in case.

Pro-tip: you can create variables out of your input commands by long-pressing on one of the words. In the pop-up that shows up, you will see a "Create Variable" option alongside the usual Cut/Copy/Select/Paste options. If you select this, that particular word will be passed to API.AI as a variable, which API.AI can then return. This can be useful when you want Google Home to respond with variable responses.

For instance, if you build a command saying "play songs by $artist" then you can have the response return the name of the artist that is set in your variable. So you can say "play songs by Muse" or "play songs by Radiohead" under the same command, and your Google Home will respond with the same band/artist name you mentioned in your command. My tutorial below does not make use of this feature as it is reserved for more advanced use cases.
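If it helps to see the idea in code, here is a minimal Python sketch of a command with a variable slot. It uses a plain regular expression as a stand-in, not API.AI's actual parsing, and the `template_to_pattern` and `respond` helpers are hypothetical names of my own.

```python
import re


def template_to_pattern(template: str):
    """Turn 'play songs by $artist' into a regex with one named group per $variable."""
    # re.split with a capture group alternates literal text and variable names.
    parts = re.split(r"\$(\w+)", template)
    pattern = ""
    for index, part in enumerate(parts):
        if index % 2 == 0:
            pattern += re.escape(part)        # literal text
        else:
            pattern += f"(?P<{part}>.+)"      # variable slot
    return re.compile(f"^{pattern}$", re.IGNORECASE)


def respond(command_template: str, response_template: str, spoken: str):
    """Fill the response with whatever was spoken in each variable slot."""
    match = template_to_pattern(command_template).match(spoken)
    if match is None:
        return None
    values = match.groupdict()
    return re.sub(
        r"\$(\w+)",
        lambda m: values.get(m.group(1), m.group(0)),
        response_template,
    )


print(respond("play songs by $artist", "Playing songs by $artist",
              "play songs by Muse"))
# Playing songs by Muse
```

The same command template covers both "Muse" and "Radiohead", because the artist name is captured rather than hard-coded.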

Once you are done building your command, click finished. You will see a dialog box pop up asking for what you want to name the natural language command. Name it something descriptive. By default it names the command after the first command you entered, which should be sufficient.

Next, it will ask you what action you want to set. This allows you to customize what command is sent to your device, and it will be stored in %avaction. For instance, if you set the action to be "findmydevice" the text "findmydevice" will be stored in the %avaction variable. This won't serve any purpose for our tutorial, but in later tutorials where we cover more advanced commands, we will make use of this.
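As a rough idea of what an action name like this could be used for, here is a small Python sketch that picks a handler based on the value of %avaction. This is my own assumption about one possible use and not something AutoVoice or Tasker provides; `ACTION_HANDLERS`, `find_my_device`, and `on_command` are hypothetical names.

```python
# Hypothetical dispatch on the action name; not part of AutoVoice or Tasker.
def find_my_device() -> str:
    # Stand-in for the real work, such as playing a loud beep.
    return "beeping"


ACTION_HANDLERS = {
    "findmydevice": find_my_device,
}


def on_command(avaction: str) -> str:
    """Run the handler registered for the received action name, if any."""
    handler = ACTION_HANDLERS.get(avaction)
    if handler is None:
        return "ignored"
    return handler()


print(on_command("findmydevice"))  # beeping
print(on_command("somethingelse"))  # ignored
```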

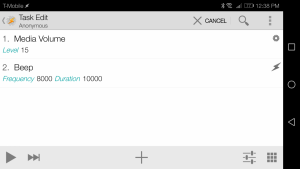

Exit out of the command creation screen by clicking on the checkmark up top, as you are now finished building and saving your natural language command. Now, we will create the Task that will fire off when the Natural Language Command is recognized. When you go back to Tasker's main screen, you will see the "new task" creation popup. Click on "new task" to create a new task. Click on the "+" icon to add your first Action to this Task. Under Audio, click on "Media Volume." Set the Level to 15. Go back to the Task editing screen and you will see your first action in the list. Now create another Action but this time click on "Alert" and select "Beep." Set the Duration to 10,000ms and set the Amplitude to 100%.

If you did the above correctly, you should have the following two Actions in the Task list.
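For reference, the Task built above can be written out as plain Python data. This is only my own shorthand for the two Actions and their settings, not a format Tasker exports or imports; the `Action` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    """Hypothetical stand-in for one Tasker Action and its settings."""
    category: str
    name: str
    settings: dict = field(default_factory=dict)


# The two Actions from the steps above, in order.
find_my_phone_task = [
    Action("Audio", "Media Volume", {"level": 15}),
    Action("Alert", "Beep", {"duration_ms": 10_000, "amplitude_percent": 100}),
]


def beep_seconds(task) -> float:
    """Total beep time in seconds across all Beep actions in the task."""
    total_ms = sum(a.settings.get("duration_ms", 0) for a in task if a.name == "Beep")
    return total_ms / 1000


print(beep_seconds(find_my_phone_task))  # 10.0
```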

Exit out of the Task creation screen and you are done. Now you can test your creation! Simply say "Ok Google, ask auto voice to find my phone" or any natural variation of it that comes to mind, and your phone should start beeping loudly for 10 seconds. The only thing you are required to say is the trigger that makes Google Home start AutoVoice - the "Ok Google, ask auto voice" or "Ok Google, let me speak to auto voice" part. Anything you say afterwards can be as free-flowing and natural as you like. The magic of API.AI makes it so that you can be flexible with your language!

Once you start creating lots of Natural Language Commands, it may be cumbersome to edit all of them from Tasker. Fortunately, you can edit them straight from the AutoVoice app. Open AutoVoice and click on "Natural Language" to bring up its settings. Under Commands, you should now see the Natural Language command we just made! If you click on it, you can edit nearly every single aspect of the command (and even set variables).

Creating Tasker Profiles to react to non-Natural Language Commands

In case you don't want to subscribe to AutoVoice, you can still create a command similar to the one above, but it will require you to list every possible combination of phrases you can think of to trigger the task. The biggest difference with this setup is that when you are creating the Event Context you must select AutoVoice Recognized rather than AutoVoice Natural Language. You will build your command list and responses in a similar manner, but API.AI will not handle any part of parsing your spoken commands, so you must be 100% accurate in speaking one of these phrases. Of course, you will still be able to edit any of these commands much like you could with Natural Language.

Otherwise, building the linked Task is the same as above. The only thing that differs is how the Task is triggered. With Natural Language, you can speak more freely. Without Natural Language, you have to be very careful how you word your command.

Conclusion

I hope you now understand how to integrate AutoVoice with Google Home. For any Tasker newbies out there, getting around the Tasker learning curve may still pose a problem. But if you have any experience with Tasker, this tutorial should serve as a nice starting point to get you to create your own Google Home commands. Alternatively, you can view Mr. Dias' tutorial in video form here.

In my limited time with the Google Home, I have come up with about a dozen fairly useful creations. In future articles, I will show you how to make some pretty cool Google Home commands such as turning on/off your PS4 by voice, reading all of your notifications, reading your last text message, and more. I won't spoil what I have in store, but I hope that this tutorial excites you for what will be coming!