Google Assistant is a powerful tool on its own, but it gets even better when you use it with other apps and services. Today at Voice Global 2020, a conference for voice tech, Google is announcing several improvements that make it easier for developers to build new experiences with Assistant. At the forefront of this effort is the new web-based Actions Builder IDE, but that's just the start.

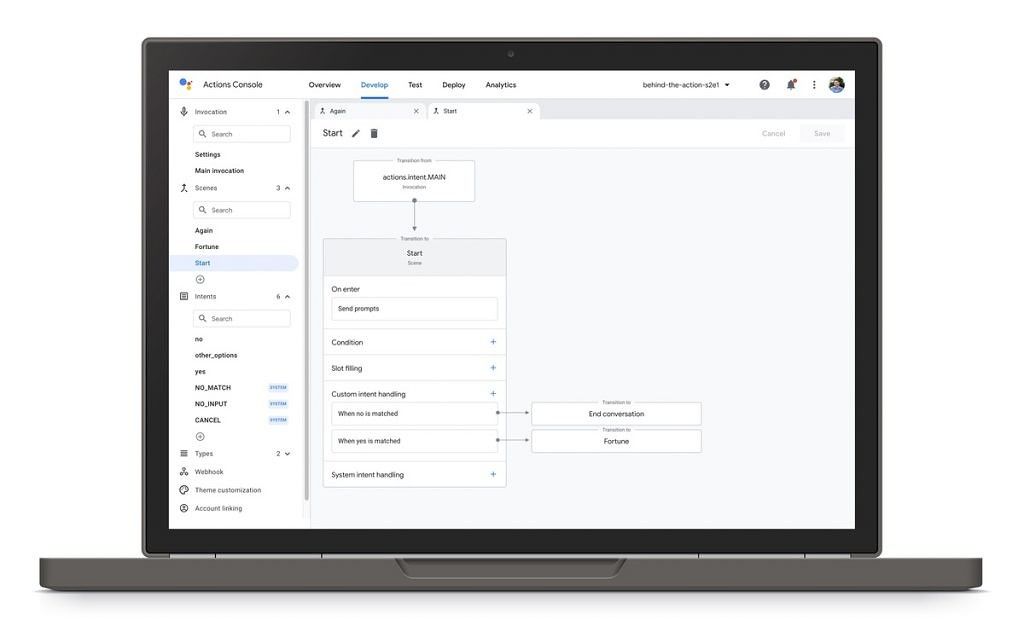

Actions Builder

Actions Builder is a new graphical interface that lets developers visualize the conversation flow with Google Assistant. It also lets them manage Natural Language Understanding (NLU) training data and provides advanced debugging tools. Actions Builder is integrated into the Actions Console, so developers can build, debug, test, release, and analyze Actions in one place rather than switching back and forth between the Actions Console and Dialogflow.

Updated Actions SDK

Next up is the updated Actions SDK for developers who prefer to work with their own tools. There is now a file-based representation of the Action and the ability to use a local IDE. The SDK enables local authoring of Natural Language Understanding and conversation flows, as well as bulk import and export of training data. The Actions SDK also includes a command-line interface for developers who prefer to work with their own source control and continuous integration tools. Lastly, Google has introduced a new conversation model alongside improvements to the runtime engine, which should make conversations easier to design and give users faster, more accurate responses.

Home Storage, updated Media API, and Continuous Match Mode

Another new feature is called Home Storage. It provides a communal storage solution for devices connected on the Home Graph, which developers can use to save context for individual users, such as the last save point from a game. Updated Media APIs allow for longer-form media sessions and let users resume playback across Google Assistant devices. A user could start playback from a specific moment or resume a previous session, for example.
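To make the Home Storage idea more concrete, here is a minimal sketch of household-level storage that several devices in the same home can read and write. This is not Google's API: the `HomeStorage` class, its `save` and `load` methods, and the home and user IDs are all hypothetical names we made up for illustration, and the data lives in memory only.

```python
from collections import defaultdict


class HomeStorage:
    """Toy in-memory stand-in for household-level storage.

    Hypothetical illustration only; this is not the Actions SDK API.
    """

    def __init__(self):
        # Maps home_id -> user_id -> saved context for that user.
        self._homes = defaultdict(dict)

    def save(self, home_id, user_id, context):
        # Store a copy so later changes by the caller do not leak in.
        self._homes[home_id][user_id] = dict(context)

    def load(self, home_id, user_id):
        # Return a copy of the saved context, or an empty dict if none exists.
        return dict(self._homes[home_id].get(user_id, {}))


storage = HomeStorage()

# Saved from one device in the home (say, a kitchen display)...
storage.save("home-1", "alice", {"game": "puzzle", "save_point": 7})

# ...and read back from another device in the same home.
resumed = storage.load("home-1", "alice")
print(resumed)
```

The point of the sketch is the keying: context is stored under the home first and the user second, so any device attached to that home can pick up where a given user left off.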

Continuous Match Mode is another new feature. It lets Google Assistant respond immediately to commands by recognizing words and phrases the developer has defined in advance, which makes for more fluid experiences. Google gives the example of a game that already uses it, called "Guess The Drawing." Continuous Match Mode lets the user keep guessing the drawing aloud until they get it right. Google Assistant will announce that the microphone will stay open temporarily so users know they can speak freely.
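The matching behavior described above can be sketched in a few lines. This is only our simplified model of the idea, not how Assistant implements it: the `continuous_match` function, its parameters, and the timestamped guesses are hypothetical, and real speech recognition is replaced with plain strings.

```python
def continuous_match(expected_phrases, utterances, duration_seconds):
    """Return (phrase, time) for the first expected phrase heard in the window.

    utterances is an iterable of (seconds_since_start, text) pairs, assumed
    to be in chronological order. Returns None if nothing matches in time.
    Hypothetical illustration only; this is not the Assistant API.
    """
    expected = {phrase.lower() for phrase in expected_phrases}
    for seconds, text in utterances:
        if seconds > duration_seconds:
            break  # The listening window has closed.
        heard = text.strip().lower()
        if heard in expected:
            return heard, seconds
    return None


# A player guessing a drawing of a cat while the microphone stays open.
guesses = [(1.0, "dog"), (2.5, "horse"), (4.0, "Cat")]
result = continuous_match(["cat"], guesses, duration_seconds=10)
print(result)
```

Wrong guesses are simply ignored rather than ending the turn, which is what lets the player keep talking until a defined phrase is heard or the window runs out.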

AMP for Smart Displays

Lastly, Google has announced that Accelerated Mobile Pages (AMP) will be coming to Google Assistant-enabled smart displays like the Nest Hub. AMP-compliant content such as news articles will be viewable on smart displays later this summer. We're not sure how this will look on smart displays, but Google says there will be more updates in the coming months.

Google says that Assistant is used by over 500 million people every month in over 30 languages across 90 countries. Google is investing in making Assistant more natural to use, and a large part of that effort has to involve developers and other third parties. For example, Google's conversational Duplex AI has been used to contact businesses to update over 500,000 business listings. As another example, Google mentions how Bamboo Learning brought its education platform to the Google Assistant so families can teach their kids at home with fun lessons on math, history, and reading.