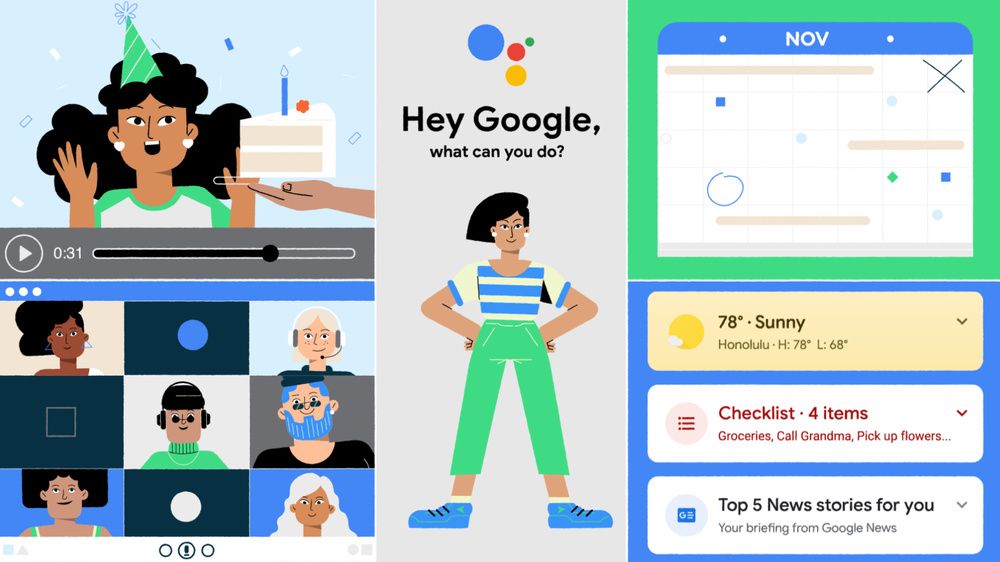

At its Assistant Developer Day on Thursday, Google introduced new features for Google Assistant, the company's virtual assistant. One of them is deeper integration with third-party Android apps: users can open apps, search within them, and complete more complex tasks such as playing music, starting a run, or posting on social media.

The feature is available now on all Assistant-enabled Android phones and works with more than 30 of the top apps on Google Play, with support for more apps coming soon. According to Droid-Life, apps that currently support shortcuts include Citi, Dunkin, PayPal, Wayfair, Wish, Uber, Yahoo! Mail, Nike Adapt, Nike Run Club, Spotify, Postmates, Grubhub, MyFitnessPal, Mint, Discord, Walmart, Etsy, Snapchat, Twitter, YouTube, Google Maps, Instagram, Twitch, and Best Buy.

As part of the deeper integration, Google Assistant is also gaining support for shortcuts with third-party apps. In Google's words: “So instead of saying ‘Hey Google, tighten my shoes with Nike Adapt,’ you can create a shortcut to just say, ‘Hey Google, lace it.’” If that’s not a glimpse into the future, I don’t know what is.

Developers can build these integrations into their apps with App Actions, which let users search within apps and open specific pages inside them. "Starting today, you can use the GET_THING intent to search within apps and the OPEN_APP_FEATURE intent to open specific pages in apps," Google announced in a blog post. Developers can implement one of the built-in intents or a custom intent, and can get started today by declaring support for one or more of these common intents in their actions.xml file. For even deeper integration, developers can use vertical-specific built-in intents (BIIs), which let Google Assistant handle the Natural Language Understanding (NLU). There are now more than 60 intents across 10 verticals, including "new categories like Social, Games, Travel & Local, Productivity, Shopping and Communications," Google said.

To improve discoverability and ease of use, Google designed suggestions and shortcuts that help users learn which Android apps support App Actions. To see what shortcuts are available, users can simply say, “Hey Google, shortcuts,” then set up and explore suggested shortcuts in settings. Shortcuts must be added to Google Assistant before they can be used. Assistant will also proactively suggest shortcuts as a chip in its search results; alternatively, users can enable shortcuts on an app-by-app basis.

Improvements to Google Assistant on Smart Displays

Google is also upgrading Assistant on smart displays with new voices and trusted voices for payments. Google says the two new voices are designed to sound more natural, and developers will be able to use them by changing the model in the Actions console.

As for trusted voices for payments, it’s exactly what it sounds like. Google will allow users to purchase things directly from their smart display, and because Google knows the sound of your voice, only authorized users will be allowed to initiate payments. You’ll be able to make a payment just by asking, and soon Google will introduce on-display CVC entry for an extra layer of security.

In addition, Google is expanding Interactive Canvas to Actions in the education and storytelling verticals, introducing the Actions Testing API as a programmatic way to test critical user journeys, offering a new Dialogflow migration tool inside the Actions console to automate moving projects to the new platform, and launching a new resource hub for game developers. To learn more about these and other changes coming to Google Assistant, visit Google's developer blog post.