Android's key attestation is the backbone of many trusted services on our smartphones, including SafetyNet, Digital Car Key, and the Identity Credential API. It has been required as part of Android since Android 8 Oreo and has relied on a root key installed on the device at the factory. Provisioning these keys required utmost secrecy on the part of the manufacturer, and if a key leaked, it would need to be revoked. A consumer would then be unable to use any of these trusted services, which is a real risk should a vulnerability ever expose a key. Remote Key Provisioning, which will be mandated in Android 13, aims to solve that problem.

The components making up the current chain of trust on Android

Before explaining how the new system works, it's important to provide context on how the old (and still in place for a lot of devices) system works. A lot of phones nowadays use hardware-backed key attestation, which you may be familiar with as the nail in the coffin for any kind of SafetyNet bypass. There are several concepts that are important to understand for the current state of key attestation.

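It is the combination of the concepts covered below (the Trusted Execution Environment, Trusty and ARM TrustZone, StrongBox, and the Keymaster TA) that ensures a developer can trust that a device hasn't been tampered with and that it can handle sensitive information in the TEE.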

Trusted Execution Environment

A Trusted Execution Environment (TEE) is a secure region on the SoC that is used for handling critical data. A TEE is mandatory on devices launched with Android 8 Oreo and higher, meaning that any recent smartphone has one. Anything outside the TEE is considered "untrusted" and can only see encrypted content. For example, DRM-protected content is encrypted with keys that can only be accessed by software running in the TEE. The main processor sees only a stream of encrypted content, whereas the TEE can decrypt the content so that it can be displayed to the user.

ARM TrustZone

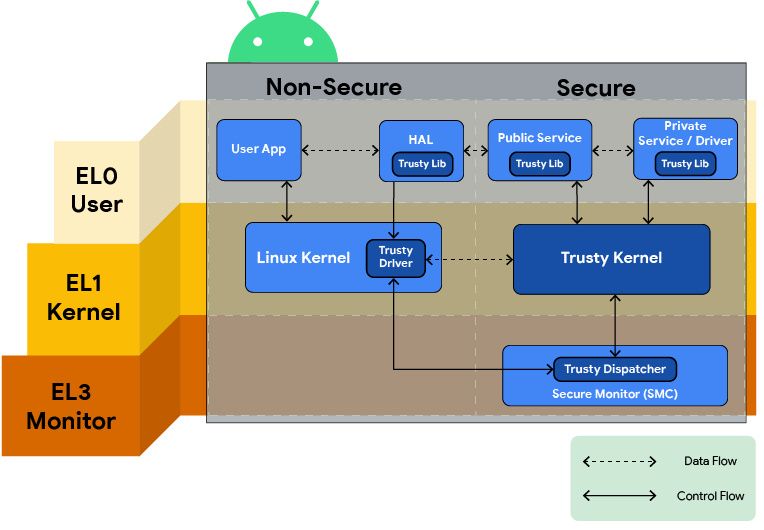

Trusty is a secure operating system that provides a TEE on Android, and on ARM systems, it makes use of ARM's TrustZone. Trusty runs on the same processor as the primary operating system and has access to the full power of the device, but it is completely isolated from the rest of the phone. Trusty consists of the following:

- A small OS kernel derived from Little Kernel

- A Linux kernel driver to transfer data between the secure environment and Android

- An Android userspace library to communicate with trusted applications (that is, secure tasks/services) via the kernel driver

Its advantage over proprietary TEE systems is that those systems can be costly and can also create instability in the Android ecosystem. Google provides Trusty to partner OEMs free of charge, and it's open source. Android supports other TEE systems, but Trusty is the one that Google pushes the most.

StrongBox

StrongBox devices are completely separate, purpose-built, certified secure CPUs. These can include embedded Secure Elements (eSE) or an on-SoC Secure Processing Unit (SPU). According to the Compatibility Definition Document, StrongBox is currently "strongly recommended" for devices launching with Android 12, as it's likely to become a requirement in a future Android release. It's essentially a stricter implementation of a hardware-backed keystore and can be implemented alongside TrustZone. One example of a StrongBox implementation is the Titan M chip in Pixel smartphones. Few phones make use of StrongBox; most rely on ARM's TrustZone.

Keymaster TA

Keymaster Trusted Application (TA) is the secure keymaster that manages and performs all keystore operations. It can run, for example, on ARM's TrustZone.

How Key Attestation is changing with Android 12 and Android 13

If a key is exposed on an Android smartphone, Google is required to revoke it. This poses a problem for any device that has a key injected at the factory -- any kind of leak that exposes the key would mean that users would not be able to access certain protected content. This may even include the revocation of access to services such as Google Pay, something that many people rely on. This is unfortunate for consumers because without having your phone repaired by a manufacturer, there would be no way for you to fix it yourself.

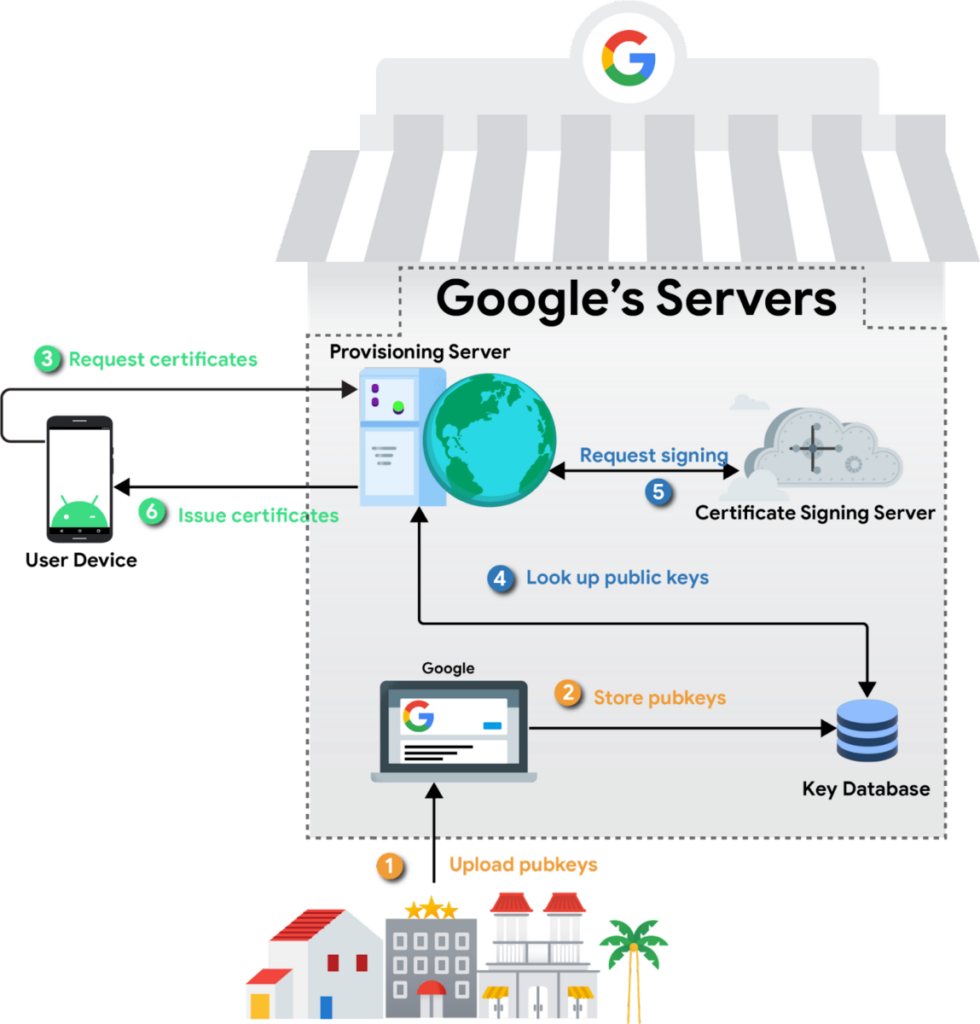

Enter Remote Key Provisioning. Starting in Android 12, Google is replacing in-factory private key provisioning with a combination of in-factory public key extraction and over-the-air certificate provisioning with short-lived certificates. This scheme will be required in Android 13, and there are a couple of benefits to it. First and foremost, it prevents OEMs and ODMs from needing to manage key secrecy in a factory. Secondly, it allows devices to be recovered should their keys be compromised, meaning that consumers won't lose access to protected services forever. Now, rather than using a certificate calculated using a key that's on the device and could be leaked through a vulnerability, a temporary certificate is requested from Google any time a service requiring attestation is used.

As for how it works, it's simple enough. Each device generates a unique, static keypair, and the OEM extracts the public portion of this keypair in its factory and submits it to Google's servers. There, these public keys will serve as the basis of trust for provisioning later. The private key never leaves the secure environment in which it is generated.

When a device first connects to the internet, it will generate a certificate signing request for keys it has generated, signing it with the private key that corresponds to the public key collected in the factory. Google's backend servers will verify the authenticity of the request and then sign the public keys, returning the certificate chains. The on-device keystore will then store these certificate chains, assigning them to apps whenever an attestation is requested. The requesting app can be anything from Google Pay to Pokémon Go.
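To make the handshake concrete, here is a minimal sketch in plain Java of the trust relationship described above: a device key whose public half is registered ahead of time, a request signed by the private half, and a backend that accepts the request only if the signature verifies. The class and method names (`ProvisioningSketch`, `signRequest`, `verifyRequest`) are hypothetical, the "request" is just the encoded public key of a freshly generated attestation key, and none of this reflects Google's actual wire format or server logic. On a real device the private key would live in the TEE or StrongBox rather than in application memory.

```java
import java.security.GeneralSecurityException;
import java.security.KeyPair;
import java.security.KeyPairGenerator;
import java.security.PublicKey;
import java.security.Signature;
import java.security.spec.ECGenParameterSpec;

/** Toy model of the provisioning handshake; not Google's actual protocol. */
final class ProvisioningSketch {

    /** Generates a P-256 key pair (stands in for a key created inside the secure environment). */
    static KeyPair newEcKeyPair() {
        try {
            KeyPairGenerator generator = KeyPairGenerator.getInstance("EC");
            generator.initialize(new ECGenParameterSpec("secp256r1"));
            return generator.generateKeyPair();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Device side: sign the request with the private half of the factory-registered key. */
    static byte[] signRequest(KeyPair deviceKey, byte[] request) {
        try {
            Signature signer = Signature.getInstance("SHA256withECDSA");
            signer.initSign(deviceKey.getPrivate());
            signer.update(request);
            return signer.sign();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException(e);
        }
    }

    /** Backend side: accept the request only if it verifies against the registered public key. */
    static boolean verifyRequest(PublicKey registeredKey, byte[] request, byte[] signature) {
        try {
            Signature verifier = Signature.getInstance("SHA256withECDSA");
            verifier.initVerify(registeredKey);
            verifier.update(request);
            return verifier.verify(signature);
        } catch (GeneralSecurityException e) {
            return false; // malformed signature or unusable key: treat as rejected
        }
    }

    public static void main(String[] args) {
        KeyPair deviceKey = newEcKeyPair();            // generated on the device
        PublicKey registered = deviceKey.getPublic();  // the part extracted in the factory

        KeyPair attestationKey = newEcKeyPair();       // a key the device wants certified
        byte[] request = attestationKey.getPublic().getEncoded();

        byte[] genuine = signRequest(deviceKey, request);
        byte[] forged = signRequest(newEcKeyPair(), request); // signed by an unregistered key

        System.out.println("genuine device accepted: " + verifyRequest(registered, request, genuine));
        System.out.println("unknown device accepted: " + verifyRequest(registered, request, forged));
    }
}
```

The point of the sketch is that the backend never needs the device's private key: holding the public half collected at the factory is enough to decide whether a request came from a known device.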

This same certificate request process will repeat regularly, either when the certificates expire or when the current key supply is exhausted. Each application receives a different attestation key and the keys themselves are rotated regularly, both of which ensure privacy. Additionally, Google's backend servers are segmented such that the server which verifies the device’s public key doesn't see the attached attestation keys. This means it's not possible for Google to correlate attestation keys back to the particular device that requested them.
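The two refresh triggers mentioned above (expiry and an exhausted key supply) can be expressed as a simple check. The following is only an illustrative sketch: the `RotationSketch` class, the `needsRefresh` method, and especially the safety `margin` parameter are assumptions made for this example, and the real on-device scheduling logic may look quite different.

```java
import java.time.Duration;
import java.time.Instant;

/** Illustrative refresh policy; names and the margin parameter are assumptions. */
final class RotationSketch {

    /**
     * Returns true when a new batch of certificates should be requested:
     * either the earliest certificate expires within the margin, or no
     * unassigned attestation keys are left.
     */
    static boolean needsRefresh(Instant earliestExpiry, int unassignedKeys,
                                Instant now, Duration margin) {
        boolean expiringSoon = !now.plus(margin).isBefore(earliestExpiry);
        boolean poolExhausted = unassignedKeys <= 0;
        return expiringSoon || poolExhausted;
    }

    public static void main(String[] args) {
        Instant now = Instant.now();
        Duration margin = Duration.ofDays(7);                 // hypothetical safety margin
        Instant freshExpiry = now.plus(Duration.ofDays(60));  // roughly "two months"

        System.out.println("fresh batch, keys left: " + needsRefresh(freshExpiry, 5, now, margin));
        System.out.println("fresh batch, no keys:   " + needsRefresh(freshExpiry, 0, now, margin));
    }
}
```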

End-users won't notice any changes, though developers need to look out for the following, according to Google.

- Certificate Chain Structure: Due to the nature of our new online provisioning infrastructure, the chain length is longer than it was previously and is subject to change.

- Root of Trust: The root of trust will eventually be updated from the current RSA key to an ECDSA key.

- RSA Attestation Deprecation: All keys generated and attested by KeyMint will be signed with an ECDSA key and corresponding certificate chain. Previously, asymmetric keys were signed by their corresponding algorithm.

- Short-Lived Certificates and Attestation Keys: Certificates provisioned to devices will generally be valid for up to two months before they expire and are rotated.
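For developers who verify attestation chains themselves, the practical takeaway is to avoid hard-coding the chain length or the root's key algorithm. Below is a rough sketch of a chain walk that makes neither assumption, using only the standard `java.security.cert.Certificate` API. The class name `ChainCheckSketch` and the idea of passing in a collection of pinned root keys (so that an RSA root and a future ECDSA root could both be trusted during a transition) are assumptions for illustration. The sketch deliberately skips validity dates, revocation checks, and parsing of the attestation extension, all of which a real verifier would need.

```java
import java.security.GeneralSecurityException;
import java.security.PublicKey;
import java.security.cert.Certificate;
import java.util.Arrays;
import java.util.Collection;
import java.util.List;

/** Illustrative chain walk; omits expiry, revocation, and extension checks. */
final class ChainCheckSketch {

    /**
     * Returns true if every certificate is signed by the next one in the list
     * and the last certificate carries (and is signed by) one of the pinned
     * root keys. Works for any chain length and any key algorithm.
     */
    static boolean chainsToTrustedRoot(List<? extends Certificate> chain,
                                       Collection<? extends PublicKey> trustedRoots) {
        if (chain.isEmpty()) {
            return false;
        }
        try {
            // Each certificate must verify under the public key of its issuer.
            for (int i = 0; i < chain.size() - 1; i++) {
                chain.get(i).verify(chain.get(i + 1).getPublicKey());
            }
            // The last entry must be one of the roots we pinned in advance.
            Certificate root = chain.get(chain.size() - 1);
            byte[] rootKey = root.getPublicKey().getEncoded();
            for (PublicKey trusted : trustedRoots) {
                if (Arrays.equals(rootKey, trusted.getEncoded())) {
                    root.verify(trusted); // check the root's self-signature
                    return true;
                }
            }
            return false;
        } catch (GeneralSecurityException e) {
            return false; // any failed signature check rejects the whole chain
        }
    }
}
```

A chain could be obtained, for example, from `java.security.KeyStore.getCertificateChain(alias)` and wrapped with `Arrays.asList` before being passed in, with the pinned root keys loaded from whatever source the verifier already trusts.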

We contacted Google to ask whether this has any relevance to Widevine DRM and to reports from some Pixel users of their DRM level being downgraded with a locked bootloader. We also asked whether this can be distributed as an OTA upgrade to users via Google Play Services now. We'll be sure to update this article if we hear back. It's not clear which components of the current chain of trust will be affected or in what way.

Source: Google