Lossless audio is essentially audio that's presented in its purest form, exactly as the artist intended it to be heard. Vinyl records are often held up as the benchmark for this kind of sound, as the records themselves are analog media that capture a continuous analog wave in their grooves. The analog sound of these records sounds closer to how artists sound live, and is significantly different from the digital audio that most of us listen to these days. Modern-day streaming and download services offer a convenient way to access huge libraries of music from anywhere at any time, but this added convenience can affect the overall quality of the music.

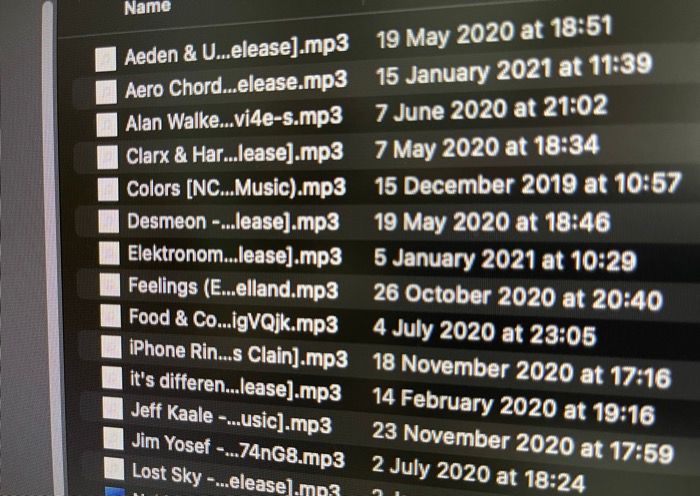

Most of the music we listen to these days is heavily compressed to save space on our devices or consume less mobile data while streaming. This compression can significantly degrade the audio quality. If you wish to listen to music in its highest quality, just the way the artist intended, lossless audio is the answer. But what is lossless audio anyway? Is it significantly superior to MP3 and other compressed audio formats?

What is audio compression?

Not as complicated as you think

Before we answer that question, it's important to learn a bit more about two (not so) commonly used terms: bitrate and sample rate.

Bitrate refers to the amount of data that's being encoded as audio every second. Data is in the form of bits, so bitrate is generally expressed in kilobits per second, or kbps. Sample rate, on the other hand, refers to the number of times per second that the sound is measured and converted to data. Since any value per second denotes frequency, the sample rate is expressed in kilohertz, or kHz. If you find it hard to understand what bitrate and sample rate actually mean, just know that, all else being equal, a higher bitrate and sample rate generally mean better audio quality.

CDs, or Compact Discs, typically use an audio format built specifically for CD players and do not have a direct-to-PC equivalent. When we "rip" CD tracks to a computer, we often convert these audio streams to WAV or AIFF, as both formats support the same bitrate and sample rate as a CD. CD-quality WAV files have a bitrate of 1,411kbps and a sample rate of 44.1kHz. This is the baseline people usually have in mind when they talk about "lossless" audio. However, there's a caveat. A high bitrate means more data, which in turn means large file sizes. To reduce the size of these audio files, they are usually compressed with a lossy codec that discards some of the data, and that's where they lose the lossless tag.
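The 1,411kbps figure follows directly from the sample rate. Here is a minimal sketch of the arithmetic, assuming 16-bit samples and two (stereo) channels, which is the CD standard; the function name `pcm_bitrate_kbps` is just an illustrative choice.

```python
def pcm_bitrate_kbps(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> float:
    """Bitrate of uncompressed PCM audio in kilobits per second."""
    # samples per second * bits per sample * number of channels
    return sample_rate_hz * bit_depth * channels / 1000


# CD audio: 44.1kHz sample rate, 16 bits per sample, stereo (assumed here)
cd_kbps = pcm_bitrate_kbps(44_100, 16)
print(f"CD-quality PCM: {cd_kbps:.1f} kbps")  # 1411.2 kbps
```

The same formula works for any combination of sample rate and bit depth, so it can be reused to estimate the raw bitrate of higher-resolution files.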

Most audio files you download from the internet these days are in MP3 format. This format is popular since it's widely supported across devices without any compatibility issues. However, MP3 is a lossy compressed audio format, which means you're losing out on some information that you may otherwise hear in a lossless audio file. We say "may" because even some audiophiles claim they cannot differentiate between a 320kbps MP3 and a lossless format like FLAC, which we'll talk about later. Audio streaming services also use a format known as AAC, which will usually sound better than MP3 at the same bitrate.

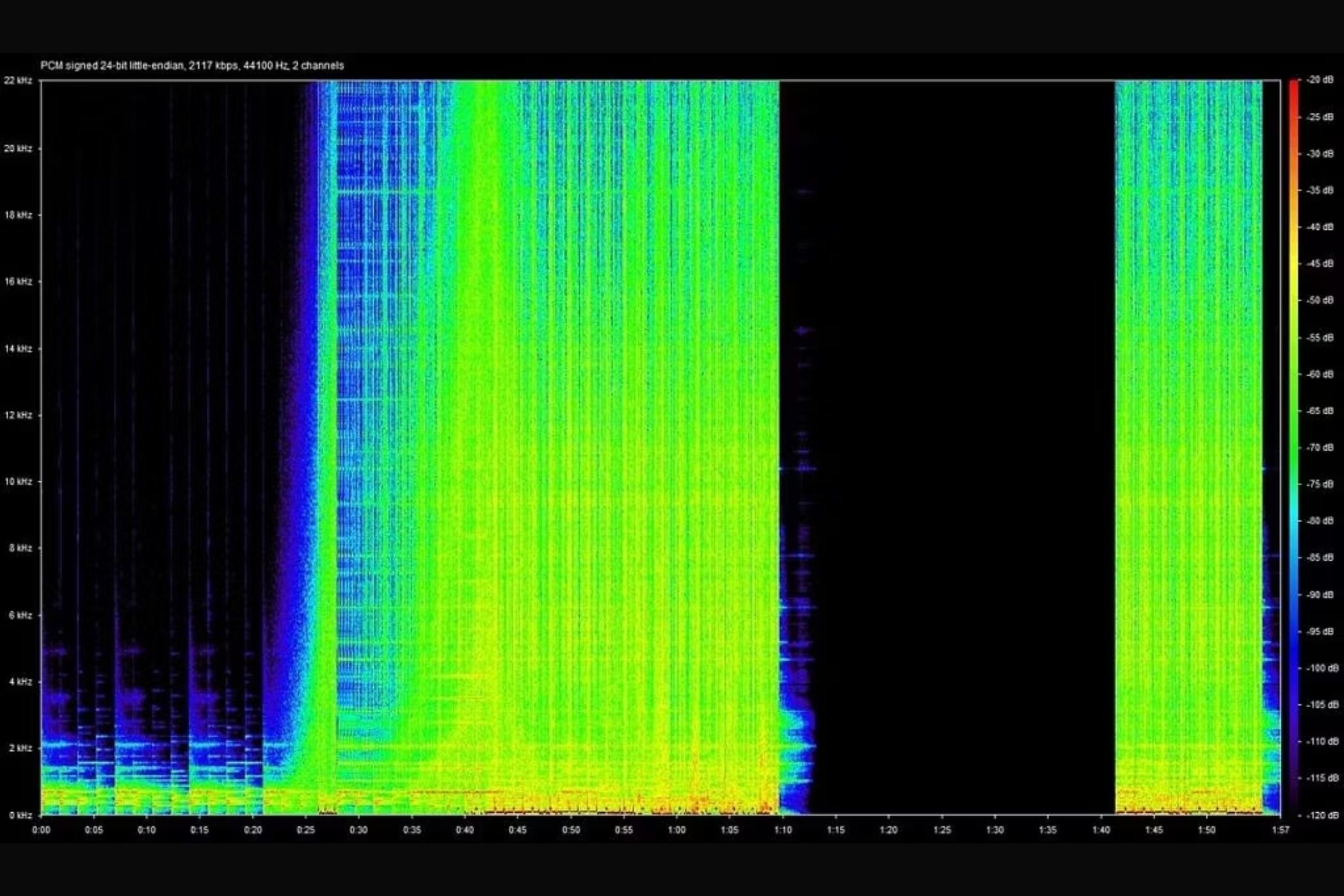

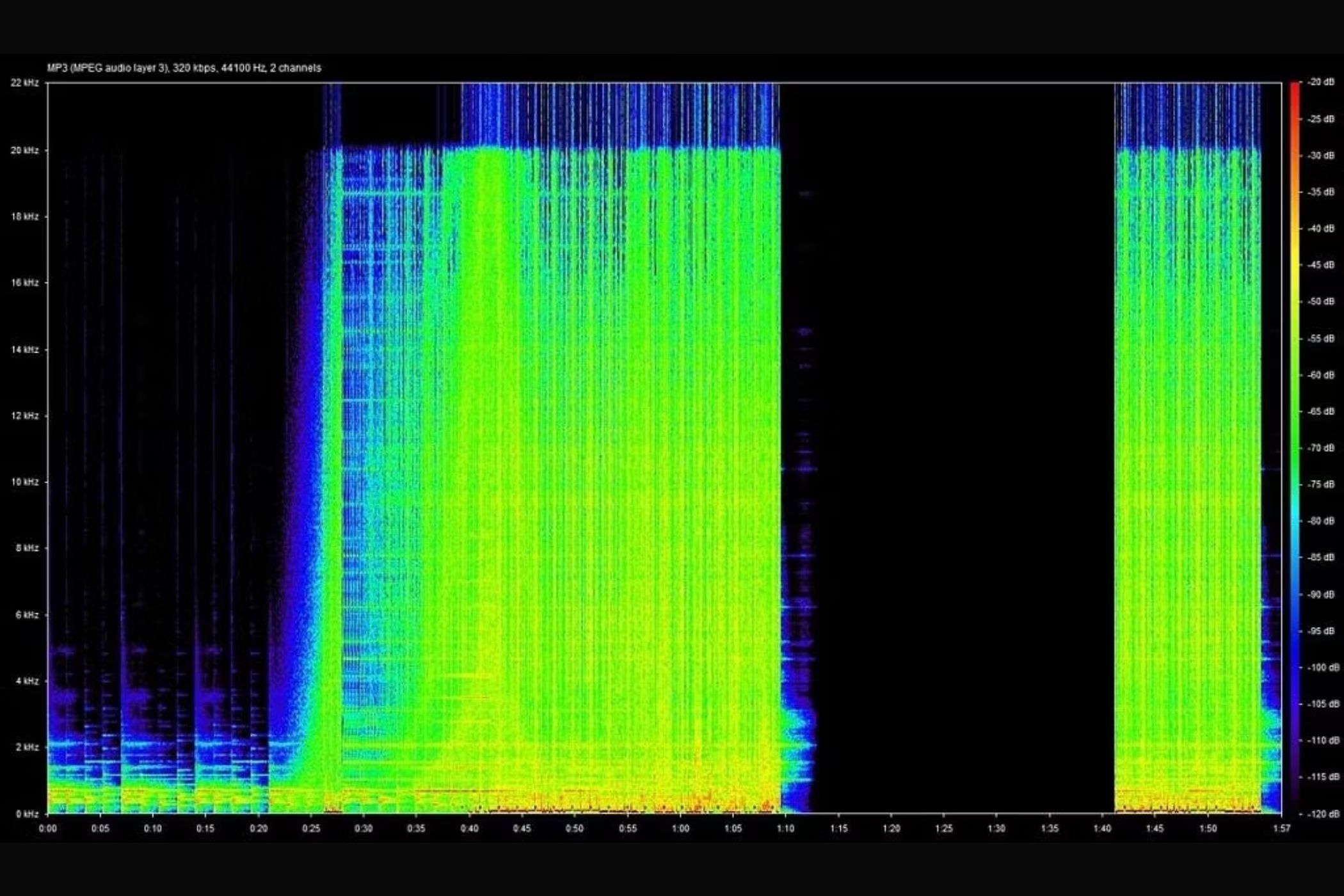

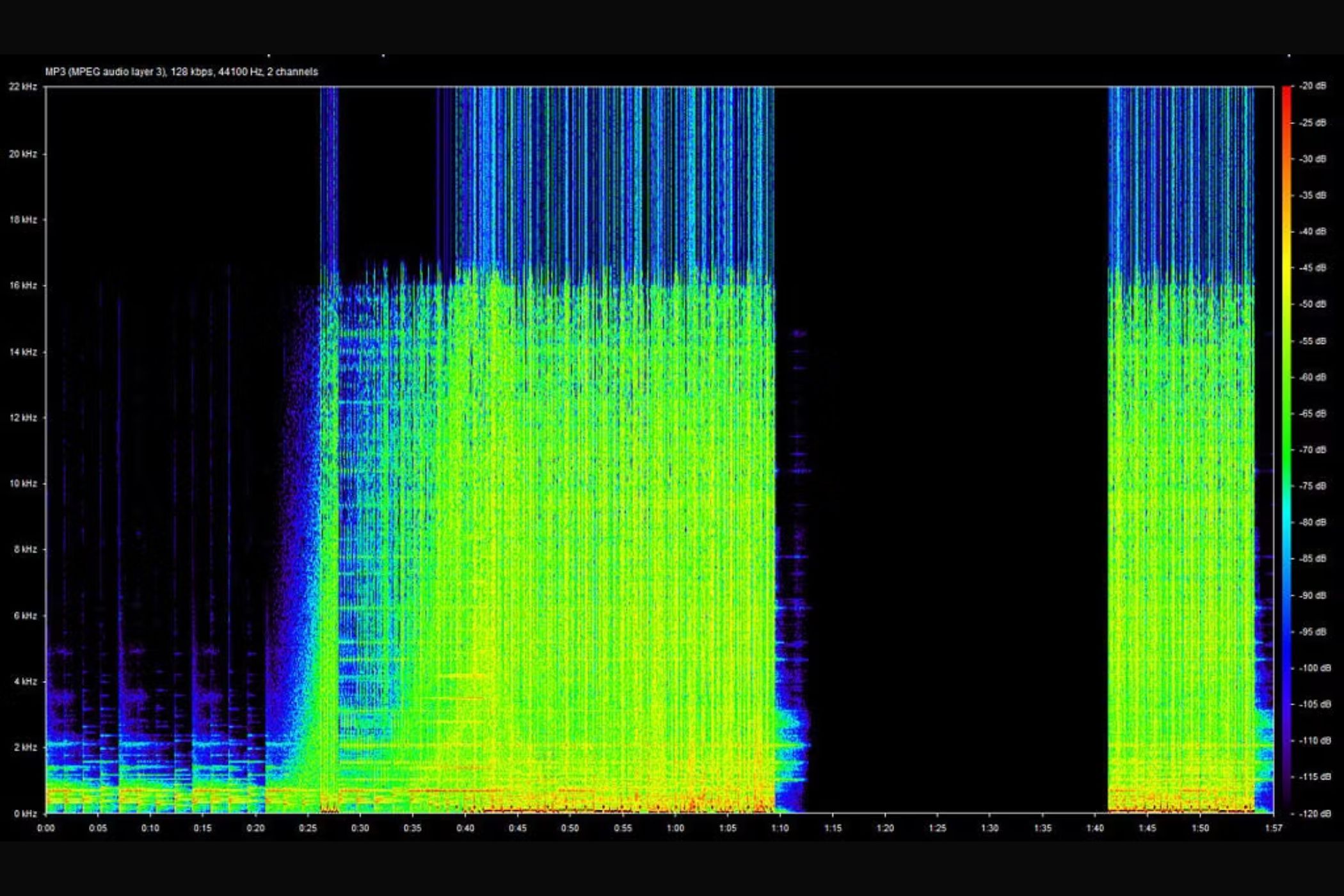

The spectrograms you see above do a good job of depicting the difference between WAV and MP3 audio. As you can see, the details above a certain frequency are cut off in the MP3 files but are retained in the WAV audio. In the 128kbps MP3 spectrogram, the cut-off frequency is lower than in the 320kbps MP3 file, indicating that the file with the lower bitrate has lower audio quality. The 24-bit WAV file retains the widest range of frequencies, indicating more detail and better audio quality.

Difference between uncompressed and lossless audio

Not all lossless audio you hear is uncompressed

The term "lossless" has been used quite loosely ever since Apple announced lossless audio for Apple Music on both iOS and Android. It's important to note, though, that lossless audio and uncompressed audio are not the same. Uncompressed audio refers to a recorded track in its purest form, without any technical intervention. This is the type of audio that has the minutest of details. Due to storage constraints, though, it's not practical to distribute audio in this form, which is where compressed yet lossless audio comes into the picture. This is what is simply called lossless audio.
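To make the distinction concrete, here is a rough sketch in Python. It is only an analogy: `zlib` is a general-purpose compressor used as a stand-in for a lossless audio codec (it is not how FLAC or ALAC work), and throwing away the low 8 bits of each sample is a crude stand-in for lossy coding (it is not how MP3 or AAC work). The test tone and its parameters are made up for illustration.

```python
import array
import math
import zlib

SAMPLE_RATE = 44_100  # samples per second, as on a CD


def sine_pcm16(freq_hz: float = 441.0, seconds: float = 0.5) -> array.array:
    """Generate a mono 16-bit PCM test tone (hypothetical sample data)."""
    n = int(SAMPLE_RATE * seconds)
    return array.array(
        "h",
        (int(16_000 * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)) for i in range(n)),
    )


original = sine_pcm16()
raw = original.tobytes()

# Lossless: compress, decompress, and get back exactly the same bytes.
packed = zlib.compress(raw, 9)
restored = zlib.decompress(packed)
lossless_ok = restored == raw

# Lossy stand-in: zero the low 8 bits of every sample. The discarded
# detail cannot be reconstructed from the reduced data afterwards.
coarse = array.array("h", ((s >> 8) << 8 for s in original))
lossy_changed = coarse != original

print(f"raw: {len(raw)} bytes, compressed: {len(packed)} bytes")
print(f"lossless round trip identical: {lossless_ok}")
print(f"lossy version differs from original: {lossy_changed}")
```

The point of the sketch is the round trip: a lossless file is smaller than the uncompressed one but decodes to identical data, while a lossy file never gets the discarded detail back.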

For some context, we mentioned that CD-quality audio has a bitrate of 1,411kbps. MP3 and AAC files, on the other hand, typically top out at 320kbps, which shows how compressed these formats actually are. By now, you must have realized it's important to find a middle ground between uncompressed audio and heavily compressed audio. Enter FLAC and ALAC.
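To put those bitrates in perspective, the sketch below estimates the file size of a single track at each one. The four-minute track length is a hypothetical example, and the calculation assumes a constant bitrate and ignores metadata and container overhead.

```python
def size_mb(bitrate_kbps: float, seconds: float) -> float:
    """Approximate file size in megabytes for a constant-bitrate stream."""
    bits = bitrate_kbps * 1000 * seconds
    return bits / 8 / 1_000_000  # bits -> bytes -> megabytes


TRACK_SECONDS = 4 * 60  # hypothetical four-minute song

for label, kbps in [("Uncompressed CD audio", 1411.2), ("MP3 320kbps", 320), ("MP3 128kbps", 128)]:
    print(f"{label}: {size_mb(kbps, TRACK_SECONDS):.1f} MB")

# How many times smaller a 320kbps file is than the uncompressed original
ratio = 1411.2 / 320
print(f"Uncompressed is about {ratio:.1f}x the size of 320kbps MP3")
```

Under these assumptions the uncompressed track comes to a little over 42MB, against under 10MB for the 320kbps MP3, which is why uncompressed audio is impractical to stream or store in bulk.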

Lossless audio on smartphones

FLAC and ALAC

Lossless audio on digital devices like smartphones and computers has been around for a while but hasn't been very popular, for two reasons. One, it has been expensive, requiring subscriptions to services like Tidal or Amazon Music HD. Two, the availability of albums in lossless formats has been limited.

How do these services work, though? They use an audio codec known as FLAC, or Free Lossless Audio Codec.

FLAC uses a compression algorithm that preserves the original audio, including high sample rates such as 96kHz, while typically occupying only around half the storage space of the equivalent WAV file. While most modern-day smartphones and laptops support FLAC, Apple developed its own codec known as ALAC, which stands for Apple Lossless Audio Codec. This is similar to FLAC, except it's natively supported on iOS and macOS. If you want to transfer lossless audio to your iPhone using iTunes, this is the format you'll need.

There's one parameter we didn't mention earlier that also plays a big part in determining audio quality: bit depth, or resolution. Bit depth indicates the number of bits of data present in each sample. A simple way to understand this is to look at it from the perspective of video. If you're watching a video at a lower resolution, like 480p, the amount of information you're going to see is lower compared to a 1080p video. Similarly, a higher bit depth or resolution indicates better audio quality.
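A quick way to see what extra bits buy you is to count how many distinct volume levels each sample can represent, and the theoretical dynamic range that follows from it (roughly 6dB per bit). This is a back-of-the-envelope sketch; real-world recordings and playback hardware rarely reach these theoretical limits.

```python
import math


def levels(bit_depth: int) -> int:
    """Number of distinct values a single sample can take."""
    return 2 ** bit_depth


def dynamic_range_db(bit_depth: int) -> float:
    """Theoretical dynamic range of linear PCM at this bit depth."""
    return 20 * math.log10(levels(bit_depth))


for bits in (16, 24):
    print(f"{bits}-bit: {levels(bits):,} levels, ~{dynamic_range_db(bits):.1f} dB")
```

Going from 16-bit to 24-bit raises the number of levels from 65,536 to 16,777,216, which is where the "more resolution" analogy with video comes from.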

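Both FLAC and ALAC support up to 32-bit audio, which is higher than the 16-bit resolution supported by CDs. Also, note that a FLAC or ALAC file is only as good as the source it was encoded from. Both codecs reproduce their source bit for bit, but a lossless file created from an already-compressed track won't restore the detail that was lost.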

Apple Music lossless audio

Apple Music joined Tidal and Amazon Music HD in bringing lossless audio streaming to the masses in June 2021. What sets Apple Music's lossless offering apart from other platforms is that the entire catalog of songs on Apple Music is available in a lossless format, not just a select few albums. Best of all, Apple doesn't charge extra for the lossless option. This is big news because Apple generally sets the trend for other brands to follow. If Apple is offering lossless audio at no additional cost, consumers who use other streaming services will be tempted to switch over to Apple Music.

Spotify has announced they're also working on bringing lossless audio to the platform, but the company has yet to provide a concrete release date. As it stands, Apple Music is the easiest and cheapest way to listen to all your favorite tracks in lossless glory. Of course, you'll need relevant hardware to experience it, which you can find in our best accessories to experience lossless audio article that lists all the different parts you will need to listen to lossless audio.

Lossless audio vs compressed audio

You'll struggle to tell the difference

For an average user, the difference between compressed audio and lossless audio may not be apparent. Even audiophiles struggle at times to tell the difference between a 320kbps MP3 and a FLAC file. A simple way to check whether you'll actually be able to tell the difference between compressed and lossless audio is to open the voice recorder app on your smartphone and record the same audio in two different formats.

On an iPhone, for example, navigating to Settings > Voice Memos will reveal an option called Audio Quality. Set it to Compressed first, and then head over to the Voice Memos app and record a short clip. Head back into Settings and change the Audio Quality to Lossless. Record another clip. Play back both clips in succession to see if you can hear a difference.

That's more or less the difference you'll notice when playing compressed audio and lossless audio. Note that it's better to use a pair of high-quality earphones or headphones to listen to this bit since the difference would be more apparent. Alternatively, you can also visit this site and run the test with your headphones on and see if you can tell the difference between lossless and lossy audio.

However, high bitrate doesn't even matter if you don't have the right hardware to make use of it.

How to experience lossless audio on your Android/iOS smartphone

You'll need a couple of accessories

You've understood what lossless audio, FLAC, ALAC, and all the other jargon mean, but how do you experience it? Well, we wish the answer was straightforward.

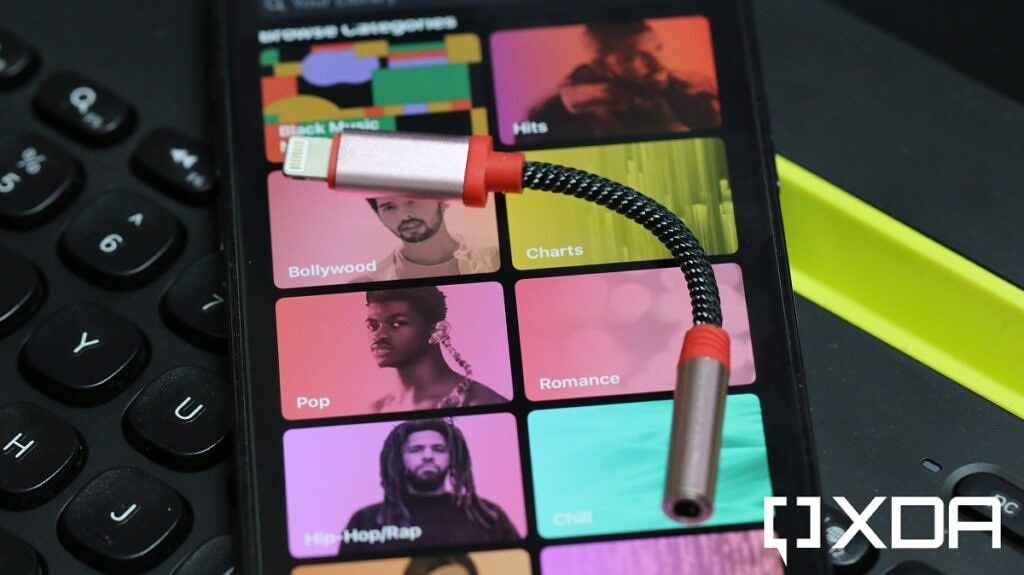

Soon after the announcement of lossless audio on Apple Music was made, Apple also put out a statement that lossless audio won't work on the AirPods Pro or even the super-expensive AirPods Max. In fact, even if you have the best smartphone and the best pair of truly wireless earphones, you'll still not be able to experience lossless audio on Apple Music.

The reason is that Bluetooth codecs just can't match the bitrate of a lossless audio file. Apple uses AAC to transmit audio via Bluetooth, which is capped at a bitrate of 256kbps. While Android phones and some wireless earphones support aptX HD, the bitrate of that codec (576kbps) also doesn't come close to ALAC. Sony's LDAC comes closest to the 1,411kbps mark of CD-quality audio (24-bit/96kHz at up to 990kbps), but the iPhone doesn't support it, and there are very few earphones out there that support the codec.

The crux of the matter is Bluetooth audio codecs don't support the bandwidth necessary for transmitting lossless audio files without compressing them.
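The gap is easy to see when the numbers are put side by side. The sketch below uses only the bitrates quoted above and compares them with the 1,411kbps of uncompressed CD-quality audio; actual Bluetooth throughput varies with the device and connection quality, so treat these as nominal ceilings.

```python
CD_PCM_KBPS = 1411  # uncompressed CD-quality bitrate quoted in this article

# Maximum Bluetooth codec bitrates as quoted in this article
bluetooth_codecs = {
    "AAC": 256,
    "aptX HD": 576,
    "LDAC": 990,
}

for name, kbps in bluetooth_codecs.items():
    share = kbps / CD_PCM_KBPS * 100
    print(f"{name}: {kbps} kbps ({share:.0f}% of CD-quality bitrate)")

# Codecs with enough headroom to carry CD-quality audio uncompressed
sufficient = [name for name, kbps in bluetooth_codecs.items() if kbps >= CD_PCM_KBPS]
print("Codecs that reach 1,411 kbps:", sufficient or "none")
```

None of the three codecs reaches the target, which is why a wired connection is recommended for lossless listening.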

The best way to experience lossless audio on your iPhone is to use a Lightning to 3.5mm DAC along with a good pair of earphones or headphones. The same goes for an Android device, except you would need a USB Type-C to 3.5mm adapter. Some Android phones do come with a built-in HiFi DAC, and those should be able to output lossless audio directly via the headphone jack or USB-C port. While every phone technically has a built-in DAC, it may not be capable of handling lossless audio. Not many phones are fitted with a high-quality DAC these days the way the now-discontinued LG phones were, so you might want to invest in a good external DAC that connects via USB. The same solution can be used on a computer or laptop as well.

Note that Apple Music lossless comes in two tiers. The Lossless tier ranges from CD quality (16-bit/44.1kHz, roughly 1,411kbps uncompressed) up to 24-bit/48kHz. This is the tier that will be accessible if you use Apple's Lightning DAC. There's also a Hi-Res Lossless tier that lets you experience audio at up to 24-bit/192kHz, for which you'll need a better DAC.

Thus, to really enjoy lossless audio, you need three parts working together:

- A device with a built-in DAC, or a device and an external DAC.

- A high-quality pair of earphones or headphones.

- A file source that's lossless, i.e. a streaming service that supports lossless audio streaming.

Here are some product suggestions to help you get started with lossless audio on your smartphone or computer. These are just some of the options you can choose from, depending on what device you have.

Apple Lightning to 3.5mm headphone adapter

This is the dongle you'll need to connect your pair of wired earphones to your iPhone via the Lightning port.

WKWZY 32-bit USB-C DAC

This is one of the most affordable 32-bit USB-C DACs we could find and should be good enough for most people wanting to experience lossless audio.

Xtrem Pro X1 USB-A DAC

The Xtrem Pro X1 DAC connects via USB-A and offers reliable performance at an affordable price. If you just want to get started, this is an ideal option.

KZ ZSN Pro IEM

These are among the most popular IEMs you can find on the market right now. We recommend them for their audio quality and affordable price tag.

If you are looking for more options, we have a separate guide on the audio equipment you'll need to get started with lossless audio. It lists several options for DACs, Chi-Fi IEMs, headphones, and more.

Should you be excited about lossless audio?

Generally speaking, lossless audio sounds better than compressed audio, but the difference will depend on the quality of your headphones as well as the audio source. While Apple Music and Tidal have made it a lot easier to access high-resolution audio, you still need to invest in accessories such as a DAC or a USB-C to 3.5mm dongle to truly experience lossless audio. If you're a casual listener, it may not be worth the investment, as the difference in sound quality may not be noticeable to you. On the other hand, audiophiles and professionals may appreciate the added depth, clarity, and richness of lossless audio. That said, audio quality is a highly subjective topic. Try out lossless music on Apple Music, compare it with Spotify, and see for yourself whether you can actually tell the difference.