Digging around in AOSP is a great way to make new discoveries about Android, and this time we've come across something rather hilarious. For some time, users have complained that the technology website TheVerge.com performs poorly on mobile devices.

To their credit, the site's performance has improved over time in my experience, and it's not as if other sites (including our own) don't have issues to work on. Nevertheless, I found it quite amusing that Google decided to use The Verge in its official set of workload benchmarks.

Android Workload Automation

Workload Automation (WA) is a framework developed by ARM for collecting performance data on Android devices by executing a suite of repeatable workloads. Google benchmarks performance on its devices by running many of these workload tests and collecting a summary of power usage, which it then imports into a spreadsheet to see how its optimizations have improved performance over time. The company picks and chooses which apps to include in its test suite, but in general it sticks to the popular Google apps. That's the gist of how it works, but we'll show the evidence from the source code and describe the test suites in more detail so you can get a better picture of the automated tests Google runs to measure performance.

Within AOSP, there is a directory dedicated to the workload automation tests. The apps used for testing are defined in defs.sh, and generally fall into one of two categories: default, pre-installed Google apps or third-party web browsers. One benchmark app stands out from the rest: com.BrueComputing.SunTemple/com.epicgames.ue4.GameActivity, which I assume refers to the BrueBench ST Reviewer benchmark, which is based on Unreal Engine 4.

```sh
# default activities. Can dynamically generate with -g.
gmailActivity='com.google.android.gm/com.google.android.gm.ConversationListActivityGmail'
clockActivity='com.google.android.deskclock/com.android.deskclock.DeskClock'
hangoutsActivity='com.google.android.talk/com.google.android.talk.SigningInActivity'
chromeActivity='com.android.chrome/_not_used'
contactsActivity='com.google.android.contacts/com.android.contacts.activities.PeopleActivity'
youtubeActivity='com.google.android.youtube/com.google.android.apps.youtube.app.WatchWhileActivity'
cameraActivity='com.google.android.GoogleCamera/com.android.camera.CameraActivity'
playActivity='com.android.vending/com.google.android.finsky.activities.MainActivity'
feedlyActivity='com.devhd.feedly/com.devhd.feedly.Main'
photosActivity='com.google.android.apps.photos/com.google.android.apps.photos.home.HomeActivity'
mapsActivity='com.google.android.apps.maps/com.google.android.maps.MapsActivity'
calendarActivity='com.google.android.calendar/com.android.calendar.AllInOneActivity'
earthActivity='com.google.earth/com.google.earth.EarthActivity'
calculatorActivity='com.google.android.calculator/com.android.calculator2.Calculator'
calculatorLActivity='com.android.calculator2/com.android.calculator2.Calculator'
sheetsActivity='com.google.android.apps.docs.editors.sheets/com.google.android.apps.docs.app.NewMainProxyActivity'
docsActivity='com.google.android.apps.docs.editors.docs/com.google.android.apps.docs.app.NewMainProxyActivity'
operaActivity='com.opera.mini.native/com.opera.mini.android.Browser'
firefoxActivity='org.mozilla.firefox/org.mozilla.firefox.App'
suntempleActivity='com.BrueComputing.SunTemple/com.epicgames.ue4.GameActivity'
homeActivity='com.google.android.googlequicksearchbox/com.google.android.launcher.GEL'
```

These activities are launched via the ADB command line with the following Systrace options to measure app performance:

dflttracecategories="gfx input view am rs power sched freq idle load memreclaim"
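To make that a bit more concrete, here is a minimal dry-run sketch of how a component string from defs.sh and the trace categories might be turned into ADB commands. The helper names (`get_package`, `launch_cmd`, `trace_cmd`) are my own invention and are not taken from Google's scripts, and the functions only print the commands instead of running them. The `am start -W -n` and `atrace -t` options are standard Android shell tools, but whether Google's scripts call them in exactly this way is an assumption.

```sh
#!/bin/sh
# Hypothetical helpers for illustration; not part of AOSP's defs.sh.

gmailActivity='com.google.android.gm/com.google.android.gm.ConversationListActivityGmail'
dflttracecategories="gfx input view am rs power sched freq idle load memreclaim"

# Print the package part of a "package/activity" component string.
get_package() {
    printf '%s\n' "${1%%/*}"
}

# Print the command that would launch component $1 and wait for the
# launch to complete (-W).
launch_cmd() {
    printf 'adb shell am start -W -n %s\n' "$1"
}

# Print the command that would capture a trace for $1 seconds using
# the space-separated categories in $2.
trace_cmd() {
    printf 'adb shell atrace -t %s %s\n' "$1" "$2"
}
```

Calling `launch_cmd "$gmailActivity"` prints the `am start` line for Gmail, and `trace_cmd 10 "$dflttracecategories"` prints a 10-second trace command; piping either into `sh` would run it against a connected device.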

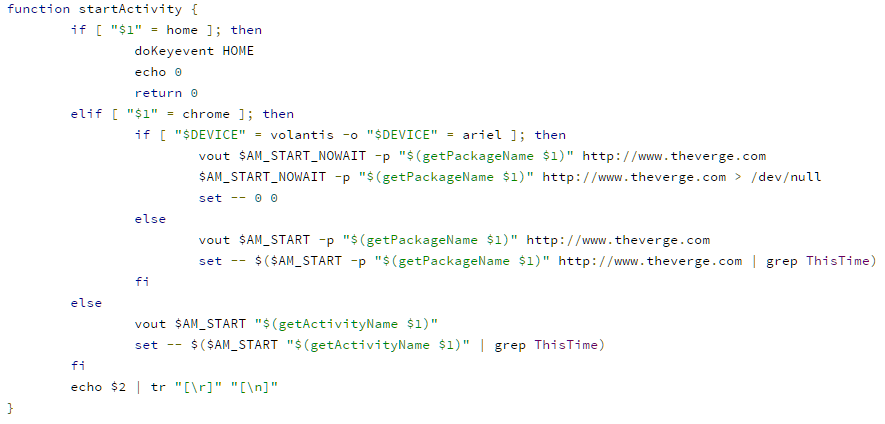

The Chrome app in particular is launched with a flag to load The Verge:

As for why the test seems to differ for volantis (Nexus 9), I'm not exactly sure. As for which tests this Chrome-activity-with-The-Verge actually goes through, we can find out by looking at the source code of the workload automation tests.
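For readers who want to try something similar themselves, opening a URL in a specific app from ADB is commonly done with a VIEW intent. The sketch below is my assumption about the general approach, not a copy of Google's code: `open_url_cmd` is a hypothetical helper, and it only prints the command rather than running it.

```sh
#!/bin/sh
# Hypothetical helper for illustration; not taken from the AOSP scripts.
# Print the adb command that asks package $1 to open URL $2 through a
# standard VIEW intent.
open_url_cmd() {
    printf 'adb shell am start -a android.intent.action.VIEW -d %s -p %s\n' "$2" "$1"
}
```

For example, `open_url_cmd com.android.chrome http://www.theverge.com` prints a command that would hand The Verge's homepage to Chrome on a connected device.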

Test Suites

First up, there's the systemapps.sh test, which Google describes as follows:

```sh
# Script to start a set of apps in order and then in each iteration
# switch the focus to each one. For each iteration, the time to start
# the app is reported as measured using atrace events and via am ThisTime.
# The output also reports if applications are restarted (eg, killed by
# LMK since previous iteration) or if there were any direct reclaim
# events.
```
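The "am ThisTime" mentioned in that comment refers to a field that `am start -W` printed on Android versions of that era. As a rough sketch of how such a value could be pulled out of the output, assuming the usual `Key: value` layout, consider the following; `parse_this_time` is a hypothetical helper and the sample text is illustrative, not captured from a real device.

```sh
#!/bin/sh
# Hypothetical helper for illustration; not taken from systemapps.sh.
# Read `am start -W` output on stdin and print the ThisTime value,
# which is reported in milliseconds.
parse_this_time() {
    awk -F': *' '/^ThisTime:/ { print $2; exit }'
}

# Illustrative sample only; real output varies by Android version.
sample_output='Status: ok
Activity: com.google.android.deskclock/com.android.deskclock.DeskClock
ThisTime: 412
TotalTime: 412
WaitTime: 447
Complete'
```

On a device, the same function could be fed directly from ADB, for example `adb shell am start -W -n "$clockActivity" | parse_this_time`.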

Next, there's the recentfling.sh test, which works like this:

```sh
# Script to start a set of apps, switch to recents and fling it back and forth.
# For each iteration, Total frames and janky frames are reported.
```

And then there's chromefling.sh, which tests Chrome's performance rather simply:

```sh
# Script to start 3 chrome tabs, fling each of them, repeat
# For each iteration, Total frames and janky frames are reported.
```
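Both fling tests report total and janky frames. One common source for those numbers on Android 6.0 and later is `dumpsys gfxinfo <package>`, although the excerpts above don't show whether Google's scripts use it. As a sketch under that assumption, a hypothetical `jank_summary` helper could reduce that output to a jank percentage like this (the sample text is made up for illustration):

```sh
#!/bin/sh
# Hypothetical helper for illustration; not taken from the AOSP scripts.
# Read `dumpsys gfxinfo <package>` output on stdin and print
# "<total frames> <janky frames> <janky percent>".
jank_summary() {
    awk '
        /Total frames rendered:/ { total = $4 }
        /Janky frames:/          { janky = $3 }
        END {
            if (total > 0)
                printf "%d %d %.1f\n", total, janky, 100 * janky / total
        }
    '
}

# Made-up sample in the layout gfxinfo uses for these two lines.
sample_gfxinfo='Total frames rendered: 200
Janky frames: 30 (15.00%)'
```

Feeding the sample through the helper with `printf '%s\n' "$sample_gfxinfo" | jank_summary` yields the two frame counts followed by the percentage of frames that were janky.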

Another amusing test in the Workload Automation suite, although unrelated to The Verge, is the youtube.sh performance test, which measures UI jank:

```sh
#
# Script to play a john oliver youtube video N times.
# For each iteration, Total frames and janky frames are reported.
#
# Options are described below.
#
iterations=10
app=youtube
searchText="last week tonight with john oliver: online harassment"
vidMinutes=15
```

Finally, each of these tests is used to measure real-world power usage by cycling through it for a set amount of time, as defined in pwrtest.sh:

```sh
# Script to gather perf and perf/watt data for several workloads
#
# Setup:
#
# - device connected to monsoon with USB passthrough enabled
# - network enabled (baseline will be measured and subtracted
#   from results) (network needed for chrome, youtube tests)
# - the device is rebooted after each test (can be inhibited
#   with "-r 0")
#
# Default behavior is to run each of the known workloads for
# 30 minutes gathering both performance and power data.
```
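The comment mentions subtracting a measured baseline and reporting perf/watt. The script's actual formula isn't shown here, so the following is only a sketch of the arithmetic under my own assumptions: "perf" is taken to be frames per second, power is given in milliwatts, and `perf_per_watt` is a hypothetical helper name.

```sh
#!/bin/sh
# Hypothetical helper for illustration; the real pwrtest.sh may define
# perf and power differently.
# Arguments: frames seconds measured_mw baseline_mw
# Prints frames per second per watt after subtracting baseline power.
perf_per_watt() {
    awk -v f="$1" -v s="$2" -v m="$3" -v b="$4" 'BEGIN {
        net_w = (m - b) / 1000            # net power in watts
        if (s <= 0 || net_w <= 0) exit 1  # reject nonsensical input
        printf "%.2f\n", (f / s) / net_w
    }'
}
```

As a worked example, 108000 frames over a 30-minute (1800-second) run is 60 frames per second; with 1500 mW measured and a 300 mW baseline, the net draw is 1.2 W, so `perf_per_watt 108000 1800 1500 300` comes out to 50 frames per second per watt.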

Google can then collect these data using pwrsummary.sh and import them into a spreadsheet:

```sh
# print summary of output generated by pwrtest.sh
#
# default results directories are <device>-<date>[-experiment]. By default
# match any device and the year 201*.
#
# Examples:
#
# - show output for all bullhead tests in july 2015:
#     ./pwrsummary.sh -r "bh-201507*"
#
# - generate CSV file for import into spreadsheet:
#     ./pwrsummary.sh -o csv
#
```
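The `-r` example suggests that results directories are selected with an ordinary shell glob over names of the form `<device>-<date>[-experiment]`. Assuming that is the case, the matching could look something like this sketch; `matches_results` is a hypothetical helper and the directory names used below are invented.

```sh
#!/bin/sh
# Hypothetical helper for illustration; not taken from pwrsummary.sh.
# Succeed if results directory name $1 matches glob pattern $2.
matches_results() {
    case "$1" in
        $2) return 0 ;;   # $2 is left unquoted so it acts as a pattern
        *)  return 1 ;;
    esac
}
```

With this helper, `matches_results "bh-20150712" "bh-201507*"` succeeds, while a directory from August 2015 such as `bh-20150812` is rejected by the same pattern.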

These are all fairly common real-world UI performance tests, not unlike the kinds you would see in our own testing. It appears that the change to load The Verge's homepage when opening Chrome was rather recent, as before last year Google would only open a new tab in Chrome during these tests. A change made on May 28th, 2015, however, introduced the use of The Verge when testing Chrome. As amusing as it is that Google is using The Verge of all places when performing Workload Automation testing, keep in mind that this doesn't mean The Verge is the worst offender out there for web performance.

Far from it, in fact, as many other webpages suffer from mediocre performance thanks to the proliferation of more and more ads to compensate for the rise of ad-blockers. Indeed, it's most likely that the decision to use The Verge was simply one of convenience, given how tech-savvy the average Googler is and the inside joke among many enthusiasts regarding The Verge's webpage performance.