Apple unveiled macOS 12 Monterey along with iOS 15, iPadOS 15, tvOS 15, and watchOS 8 during WWDC21, back in June. While they're not the biggest, most packed updates we've seen from Apple, they do include some interesting changes and features that supercharge the Mac. macOS 12 Monterey will be released later this fall, potentially along with an all-new MacBook Pro.

Below are three features we really love about this upcoming version of macOS. They're followed by three we're disappointed Apple hasn't added yet.

The Yays

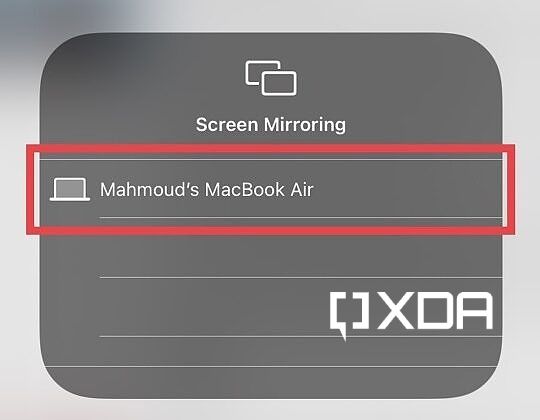

AirPlay casts itself into the Mac

Tech-savvy users have been using third-party software to mirror content from their iDevices to their Macs for years now. Whether you wanted to mirror your iPad's screen for a live demo or stream a video from your iPhone on the big Mac screen, there was no easy built-in way to do it.

As someone who used third-party software to enable AirPlay on my desktop computer before Monterey, I can assure you that these apps are a pain to use. They're inconsistent, buggy, laggy, and of course, you have to pay for them. I could never bring myself to keep any of them installed for more than a few days, if not hours.

macOS 12 Monterey saves us all the hassle (and a few bucks) of dealing with third-party AirPlay servers. It offers a seamless, lag-free experience that requires no setup at all. We've prepared a comprehensive guide that covers all the steps you need to mirror content from your iDevices to your Mac.

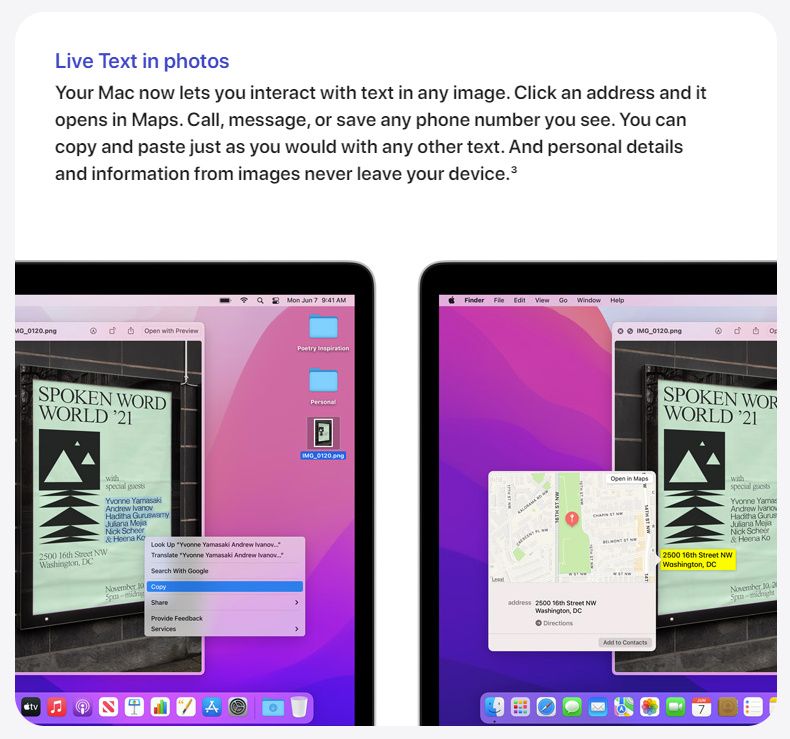

Live Text gets selected and featured

Despite being marked as an M1-exclusive feature on Apple's website, Live Text made it to Intel Macs in an earlier macOS 12 beta. It's one of the handiest new features in macOS 12 Monterey. This feature brings OCR (Optical Character Recognition) to all supported Macs, so you no longer have to depend on third-party tools or manually type out information that appears in a photo.

Apple describes the feature as follows:

Your Mac now lets you interact with text in any image. Click an address and it opens in Maps. Call, message, or save any phone number you see. You can copy and paste just as you would with any other text. And personal details and information from images never leave your device.

So not only does it let you select and copy text from images, but it also allows you to take action on the spot, such as emailing an address it detects. This saves you from having to manually paste the email address into the Mail app. The feature works across the Photos and Messages apps, in addition to online photos viewed in Safari.

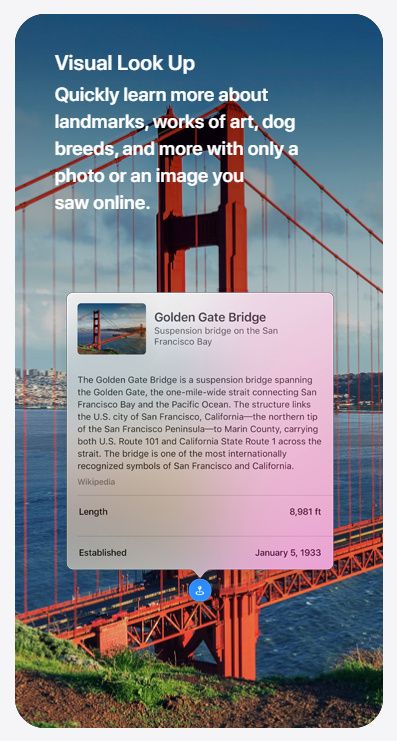

Beyond detecting words, macOS 12 Monterey can also recognize breeds and types of pets, plants, books, album art, places, and more, through Visual Look Up. It's Apple's version of Google Lens, basically.

Universal Control almost breaks the space-time continuum

Universal Control makes life easier, and that's an understatement. Imagine you're working on a Keynote presentation on your Mac and decide you want to insert a drawing. So you take out your iPad and Apple Pencil and start doodling. When you're done, the "natural" thing to do would be to AirDrop the artwork from your iPad to your Mac, then browse your files in Finder to locate the drawing, then finally insert it into the Keynote presentation. Simple, right? While this method feels natural to us, there's a significantly more natural way to do it.

Universal Control brings cursor and drag-and-drop support to up to three Mac and iPad devices at once. Done doodling? Just bring the Mac cursor to the screen's edge towards your iPad, and the cursor will magically jump from your Mac's display to your iPad's. Drag your precious little doodle from your iPad, and take it to the edge of the screen towards your Mac. And just like that, the doodle will hop from your iPad to your Mac, where you can directly drop it into the Keynote presentation. Now that's what "natural" looks and feels like.

The Nays

Where is the Weather?!

Apple redesigned the Weather app on iOS 15 this year. It comes with a more modern design and offers more data, such as an Air Quality Index in supported regions. However, this sleek app is still nowhere to be found on macOS (or iPadOS). macOS 12 Monterey redesigns the Weather widget to match the one on iOS 15, but clicking it redirects users to weather.com. This has always been the behavior on macOS and iPadOS.

A full-fledged Weather app is long overdue. There's nothing stopping Apple from including it at this point, especially after the introduction of Universal Apps, which make bringing iOS apps to the Mac a relatively simple process. While the weather.com website works, it's not as clean and convenient as a built-in Apple app. We will have to wait and see if Apple eventually decides to bring it to macOS and iPadOS.

Translation should transition to macOS

Apple brought the Translate app to iPads with iPadOS 15. What used to be an iPhone-exclusive app can now be used on the bigger screen, but not on the biggest, at least not yet. The Translate app supports both text and speech input, making it a great tool for communicating with people who speak other languages. However, it's nowhere to be found on macOS. Bringing it to the Mac should be even easier for Apple now that an iPad version exists, since the layouts would be almost ready for macOS as is.

While Apple supports system-wide translation on macOS 12 Monterey, not having a dedicated app can make it harder and more confusing to use, not to mention the inability to use conversation mode. And even system-wide translation is very limited because Apple only supports about a dozen languages. Google Translate, by contrast, supports over a hundred. It's hard to stick to Apple's services when rivals offer more accurate ones with wider platform support. And I say this as an Apple enthusiast.

Unleash the widgets already, Apple

iPadOS 15 brought the ability to place widgets on the iPad Home Screen, after users had complained about it being an iPhone-exclusive feature on iOS/iPadOS 14. The widgets on macOS, despite being the same (size and behavior-wise) as those on iOS/iPadOS, are still stuck in the Notification Center. We would love to see Apple allow us to drag them to the desktop so we can view them instantly. Right now they're hidden behind a swipe or click that brings out the Notification Center, which defeats the purpose of "viewing information at a glance." iPadOS 14 at least allowed users to pin the widgets page to the Home Screen, making it possible to view them at all times.

In the case of macOS, we can't even pin the Notification Center to the desktop. What blows my mind is that Macs are used in landscape orientation at all times, so widget sizing and distribution when switching between landscape and portrait isn't even a concern. Apple managed to solve that problem on iPadOS 15, but for some reason widgets are still restricted on macOS 12 Monterey.

macOS 12 Monterey brings some features we had desperately been waiting for, such as turning your Mac into an AirPlay server. However, it's still lacking some features that we would really love to see Apple add, sooner rather than later.

What is your favorite new Mac feature? Have you updated to the beta version already or are you waiting for the stable release later this fall? Let us know in the comments section below.